Detection

Uses a CNN-based detection model.

Models evaluated: ACF, FrRCNN(ZF), FrRCNN(VGG16)

FrRCNN(VGG16) gave the best results on both the detection metrics (recall, precision) and the tracking metrics (ID switches, MOTA).

Estimation Model

State of each target

Geometry of the bounding box

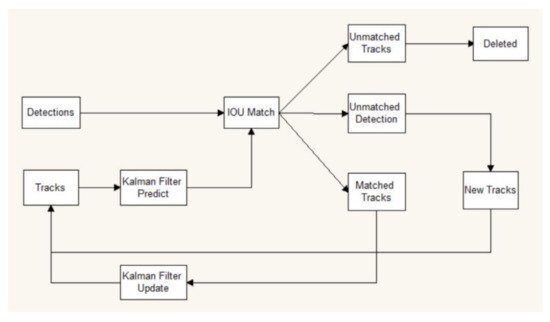
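As a rough illustration of this state: the SORT paper models each target as x = [u, v, s, r, u', v', s'], where (u, v) is the box centre, s is the box area (scale), r is the aspect ratio, and the primed terms are the velocities of u, v, and s. The sketch below converts a corner-format box into the observed part [u, v, s, r] and back. The names `bbox_to_z` and `z_to_bbox` are hypothetical stand-ins for the `convert_bbox_to_z` / `convert_x_to_bbox` helpers that the tracker code calls, and plain lists are used instead of NumPy arrays to keep the example dependency-free.

```python
import math


def bbox_to_z(bbox):
    """Convert [x1, y1, x2, y2] into the observation [u, v, s, r]."""
    x1, y1, x2, y2 = bbox[:4]
    w = x2 - x1
    h = y2 - y1
    u = x1 + w / 2.0      # centre x
    v = y1 + h / 2.0      # centre y
    s = w * h             # scale (area)
    r = w / float(h)      # aspect ratio
    return [u, v, s, r]


def z_to_bbox(z):
    """Convert [u, v, s, r] back into [x1, y1, x2, y2]."""
    u, v, s, r = z[:4]
    w = math.sqrt(s * r)  # s * r = (w * h) * (w / h) = w ** 2
    h = s / w
    return [u - w / 2.0, v - h / 2.0, u + w / 2.0, v + h / 2.0]
```

Keeping the aspect ratio r without a velocity term reflects the paper's assumption that a target's aspect ratio stays constant.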

Data Association

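Assign newly detected objects to existing targets.

1. Each existing target's BB geometry is estimated by predicting its new location in the current frame.

2. The assignment cost matrix is computed as the IOU distance between each detected object and every BB predicted from the existing targets. (A minimum threshold IOUmin is set; an assignment whose IOU is below the threshold is rejected.)

3. The assignment problem is solved with the Hungarian algorithm.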
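The three steps can be sketched as follows. This is a dependency-free illustration, not the code from sort.py: `iou` and `associate` are hypothetical names, and an exhaustive search over permutations stands in for the Hungarian algorithm. The exhaustive search finds the same optimal assignment but is only practical for a handful of boxes; in practice a routine such as `scipy.optimize.linear_sum_assignment` would be used.

```python
from itertools import permutations


def iou(a, b):
    """IOU of two boxes given as [x1, y1, x2, y2]."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def associate(detections, predictions, iou_min=0.3):
    """Return (matches, unmatched_detections, unmatched_predictions).

    matches is a list of (detection_index, prediction_index) pairs.
    """
    n_det, n_trk = len(detections), len(predictions)
    iou_matrix = [[iou(d, p) for p in predictions] for d in detections]

    # Enumerate every one-to-one assignment of the smaller set to the larger.
    if n_det <= n_trk:
        candidates = (list(enumerate(p))
                      for p in permutations(range(n_trk), n_det))
    else:
        candidates = ([(d, t) for t, d in enumerate(p)]
                      for p in permutations(range(n_det), n_trk))

    # Maximising total IOU is equivalent to minimising total IOU distance.
    best = max(candidates,
               key=lambda pairs: sum(iou_matrix[d][t] for d, t in pairs))

    # Reject assignments whose overlap is below the threshold.
    matches = [(d, t) for d, t in best if iou_matrix[d][t] >= iou_min]
    matched_d = {d for d, _ in matches}
    matched_t = {t for _, t in matches}
    unmatched_dets = [d for d in range(n_det) if d not in matched_d]
    unmatched_trks = [t for t in range(n_trk) if t not in matched_t]
    return matches, unmatched_dets, unmatched_trks
```

Detections left unmatched become candidates for new tracks, and predictions left unmatched are tracks that missed a detection in this frame.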

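Creation and Deletion of Track Identities

Unique IDs must be created and destroyed as objects enter and leave the image.

Any detection whose overlap is less than IOUmin is considered to indicate an untracked object -> it is initialised as a BB with zero velocity -> the covariance of the velocity components is initialised with large values to reflect this uncertainty.

To prevent tracking of false positives, a new track first goes through a probationary period in which it must keep being associated with detections. A track is terminated if it is not detected for TLost frames.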
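A minimal sketch of this bookkeeping is shown below. It is a simplification under stated assumptions: `TrackLifecycle` is a hypothetical class that holds only the hit/miss counters (no Kalman filter), exactly one of `mark_hit` or `mark_miss` is called per frame, and the special case in which sort.py also reports tracks during the first few frames of a sequence is left out. In the sort.py code, the probation length corresponds to `min_hits` and TLost corresponds to `max_age`.

```python
class TrackLifecycle:
    """Hit/miss counters that decide when a track is reported or deleted."""

    def __init__(self, track_id):
        self.track_id = track_id
        self.hit_streak = 0          # consecutive frames with a matched detection
        self.time_since_update = 0   # consecutive frames without one

    def mark_hit(self):
        """Call when a detection was assigned to this track in the current frame."""
        self.hit_streak += 1
        self.time_since_update = 0

    def mark_miss(self):
        """Call when no detection was assigned in the current frame."""
        self.hit_streak = 0
        self.time_since_update += 1

    def is_confirmed(self, min_hits):
        """Probation passed: matched this frame and enough consecutive hits."""
        return self.time_since_update == 0 and self.hit_streak >= min_hits

    def is_dead(self, t_lost):
        """The track has gone undetected for more than t_lost frames."""
        return self.time_since_update > t_lost
```

With `t_lost=1`, a track survives a single missed frame and is removed after the second consecutive miss.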

# sort.py start point
# Imports used by this excerpt; parse_args() and Sort are defined elsewhere in sort.py.
import glob
import os
import time

import matplotlib.pyplot as plt
import matplotlib.patches as patches
import numpy as np
from skimage import io

if __name__ == '__main__':
    # all train
    args = parse_args()
    display = args.display
    phase = args.phase
    total_time = 0.0
    total_frames = 0
    colours = np.random.rand(32, 3) #used only for display
    if(display):
        if not os.path.exists('mot_benchmark'):
            print('\n\tERROR: mot_benchmark link not found!\n\n Create a symbolic link to the MOT benchmark\n (https://motchallenge.net/data/2D_MOT_2015/#download). E.g.:\n\n $ ln -s /path/to/MOT2015_challenge/2DMOT2015 mot_benchmark\n\n')
            exit()
        plt.ion()
        fig = plt.figure()
        ax1 = fig.add_subplot(111, aspect='equal')

    if not os.path.exists('output'):
        os.makedirs('output')
    pattern = os.path.join(args.seq_path, phase, '*', 'det', 'det.txt')
    for seq_dets_fn in glob.glob(pattern):
        mot_tracker = Sort(max_age=args.max_age,
                           min_hits=args.min_hits,
                           iou_threshold=args.iou_threshold) #create instance of the SORT tracker
        seq_dets = np.loadtxt(seq_dets_fn, delimiter=',')
        seq = seq_dets_fn[pattern.find('*'):].split(os.path.sep)[0]

        with open(os.path.join('output', '%s.txt'%(seq)),'w') as out_file:
            print("Processing %s."%(seq))
            for frame in range(int(seq_dets[:,0].max())):
                frame += 1 #detection and frame numbers begin at 1
                dets = seq_dets[seq_dets[:, 0]==frame, 2:7]
                dets[:, 2:4] += dets[:, 0:2] #convert from [x1,y1,w,h] to [x1,y1,x2,y2]
                total_frames += 1

                if(display):
                    fn = os.path.join('mot_benchmark', phase, seq, 'img1', '%06d.jpg'%(frame))
                    im = io.imread(fn)
                    ax1.imshow(im)
                    plt.title(seq + ' Tracked Targets')

                start_time = time.time()
                trackers = mot_tracker.update(dets) #update the multi-object tracker's tracks
                cycle_time = time.time() - start_time
                total_time += cycle_time

                for d in trackers:
                    print('%d,%d,%.2f,%.2f,%.2f,%.2f,1,-1,-1,-1'%(frame,d[4],d[0],d[1],d[2]-d[0],d[3]-d[1]),file=out_file)
                    if(display):
                        d = d.astype(np.int32)
                        ax1.add_patch(patches.Rectangle((d[0],d[1]),d[2]-d[0],d[3]-d[1],fill=False,lw=3,ec=colours[d[4]%32,:]))

                if(display):
                    fig.canvas.flush_events()
                    plt.draw()
                    ax1.cla()

    print("Total Tracking took: %.3f seconds for %d frames or %.1f FPS" % (total_time, total_frames, total_frames / total_time))

    if(display):
        print("Note: to get real runtime results run without the option: --display")
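The loop above reads MOTChallenge-style detection rows and writes one result row per tracked box. As a small illustration of those two conversions, the sketch below assumes the 2D MOT 2015 column order `frame, id, bb_left, bb_top, bb_width, bb_height, conf, x, y, z`. The helper names `parse_det_row` and `format_track_row` are hypothetical and do not appear in sort.py.

```python
def parse_det_row(line):
    """Parse one det.txt row into (frame, [x1, y1, x2, y2, score])."""
    fields = [float(v) for v in line.strip().split(',')]
    frame = int(fields[0])
    left, top, width, height, score = fields[2:7]
    # The tracker expects corner format, so width/height become x2/y2.
    return frame, [left, top, left + width, top + height, score]


def format_track_row(frame, track):
    """Format a tracker output [x1, y1, x2, y2, id] as a MOT result row."""
    x1, y1, x2, y2, track_id = track
    # Results go back to [left, top, width, height]; the trailing
    # 1,-1,-1,-1 fills the conf, x, y, z columns as in the loop above.
    return '%d,%d,%.2f,%.2f,%.2f,%.2f,1,-1,-1,-1' % (
        frame, track_id, x1, y1, x2 - x1, y2 - y1)
```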

# Use a Kalman filter to predict and update each target's state and assign it to a track
from filterpy.kalman import KalmanFilter  # import needed by this excerpt

class KalmanBoxTracker(object):
    """
    This class represents the internal state of individual tracked objects observed as bbox.
    """
    count = 0

    def __init__(self,bbox):
        """
        Initialises a tracker using initial bounding box.
        """
        #define constant velocity model
        #state x = [u, v, s, r, du, dv, ds]: box centre, area, aspect ratio and the velocities of u, v, s
        self.kf = KalmanFilter(dim_x=7, dim_z=4)
        self.kf.F = np.array([[1,0,0,0,1,0,0],
                              [0,1,0,0,0,1,0],
                              [0,0,1,0,0,0,1],
                              [0,0,0,1,0,0,0],
                              [0,0,0,0,1,0,0],
                              [0,0,0,0,0,1,0],
                              [0,0,0,0,0,0,1]])
        self.kf.H = np.array([[1,0,0,0,0,0,0],
                              [0,1,0,0,0,0,0],
                              [0,0,1,0,0,0,0],
                              [0,0,0,1,0,0,0]])

        self.kf.R[2:,2:] *= 10.
        self.kf.P[4:,4:] *= 1000. #give high uncertainty to the unobservable initial velocities
        self.kf.P *= 10.
        self.kf.Q[-1,-1] *= 0.01
        self.kf.Q[4:,4:] *= 0.01

        #convert_bbox_to_z and convert_x_to_bbox are helpers defined elsewhere in sort.py
        self.kf.x[:4] = convert_bbox_to_z(bbox)
        self.time_since_update = 0
        self.id = KalmanBoxTracker.count
        KalmanBoxTracker.count += 1
        self.history = []
        self.hits = 0
        self.hit_streak = 0
        self.age = 0

    def update(self,bbox):
        """
        Updates the state vector with observed bbox.
        """
        self.time_since_update = 0
        self.history = []
        self.hits += 1
        self.hit_streak += 1
        self.kf.update(convert_bbox_to_z(bbox))

    def predict(self):
        """
        Advances the state vector and returns the predicted bounding box estimate.
        """
        #keep the predicted area from becoming non-positive
        if((self.kf.x[6]+self.kf.x[2])<=0):
            self.kf.x[6] *= 0.0
        self.kf.predict()
        self.age += 1
        if(self.time_since_update>0):
            self.hit_streak = 0
        self.time_since_update += 1
        self.history.append(convert_x_to_bbox(self.kf.x))
        return self.history[-1]

    def get_state(self):
        """
        Returns the current bounding box estimate.
        """
        return convert_x_to_bbox(self.kf.x)
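To make the constant-velocity model concrete, the sketch below applies the same 7x7 transition matrix `F` and 4x7 measurement matrix `H` by hand. It is an illustration only: it uses plain lists rather than filterpy, it ignores the covariance and noise terms that a real Kalman filter also propagates, and the function names `predict_state` and `observe` are hypothetical.

```python
# State order: [u, v, s, r, du, dv, ds]
F = [
    [1, 0, 0, 0, 1, 0, 0],  # u <- u + du
    [0, 1, 0, 0, 0, 1, 0],  # v <- v + dv
    [0, 0, 1, 0, 0, 0, 1],  # s <- s + ds
    [0, 0, 0, 1, 0, 0, 0],  # r stays constant (no velocity term)
    [0, 0, 0, 0, 1, 0, 0],  # velocities are carried over unchanged
    [0, 0, 0, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 0, 1],
]

H = [
    [1, 0, 0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 0, 1, 0, 0, 0],
]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]


def predict_state(x):
    """One noise-free prediction step: x' = F x."""
    return matvec(F, x)


def observe(x):
    """Measurement model z = H x: only [u, v, s, r] are observed."""
    return matvec(H, x)
```

Because only [u, v, s, r] are observed, the velocities are never measured directly. That is why the tracker gives them a large initial covariance in `P[4:,4:]`.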

def associate_detections_to_trackers(detections,trackers,iou_threshold = 0.3):
    """
    Assigns detections to tracked object (both represented as bounding boxes)

    Returns 3 lists of matches, unmatched_detections and unmatched_trackers
    """
    if(len(trackers)==0):
        return np.empty((0,2),dtype=int), np.arange(len(detections)), np.empty((0,5),dtype=int)

    iou_matrix = iou_batch(detections, trackers)

    if min(iou_matrix.shape) > 0:
        a = (iou_matrix > iou_threshold).astype(np.int32)
        if a.sum(1).max() == 1 and a.sum(0).max() == 1:
            matched_indices = np.stack(np.where(a), axis=1)
        else:
            matched_indices = linear_assignment(-iou_matrix)
    else:
        matched_indices = np.empty(shape=(0,2))

    unmatched_detections = []
    for d, det in enumerate(detections):
        if(d not in matched_indices[:,0]):
            unmatched_detections.append(d)
    unmatched_trackers = []
    for t, trk in enumerate(trackers):
        if(t not in matched_indices[:,1]):
            unmatched_trackers.append(t)

    #filter out matched with low IOU
    matches = []
    for m in matched_indices:
        if(iou_matrix[m[0], m[1]]<iou_threshold):
            unmatched_detections.append(m[0])
            unmatched_trackers.append(m[1])
        else:
            matches.append(m.reshape(1,2))
    if(len(matches)==0):
        matches = np.empty((0,2),dtype=int)
    else:
        matches = np.concatenate(matches,axis=0)

    return matches, np.array(unmatched_detections), np.array(unmatched_trackers)

class Sort(object):
    def __init__(self, max_age=1, min_hits=3, iou_threshold=0.3):
        """
        Sets key parameters for SORT
        """
        self.max_age = max_age
        self.min_hits = min_hits
        self.iou_threshold = iou_threshold
        self.trackers = []
        self.frame_count = 0

    def update(self, dets=np.empty((0, 5))):
        """
        Params:
          dets - a numpy array of detections in the format [[x1,y1,x2,y2,score],[x1,y1,x2,y2,score],...]
        Requires: this method must be called once for each frame even with empty detections (use np.empty((0, 5)) for frames without detections).
        Returns a similar array, where the last column is the object ID.

        NOTE: The number of objects returned may differ from the number of detections provided.
        """
        self.frame_count += 1
        # get predicted locations from existing trackers.
        trks = np.zeros((len(self.trackers), 5))
        to_del = []
        ret = []
        for t, trk in enumerate(trks):
            pos = self.trackers[t].predict()[0]
            trk[:] = [pos[0], pos[1], pos[2], pos[3], 0]
            if np.any(np.isnan(pos)):
                to_del.append(t)
        trks = np.ma.compress_rows(np.ma.masked_invalid(trks))
        for t in reversed(to_del):
            self.trackers.pop(t)
        matched, unmatched_dets, unmatched_trks = associate_detections_to_trackers(dets,trks, self.iou_threshold)

        # update matched trackers with assigned detections
        for m in matched:
            self.trackers[m[1]].update(dets[m[0], :])

        # create and initialise new trackers for unmatched detections
        for i in unmatched_dets:
            trk = KalmanBoxTracker(dets[i,:])
            self.trackers.append(trk)
        i = len(self.trackers)
        for trk in reversed(self.trackers):
            d = trk.get_state()[0]
            if (trk.time_since_update < 1) and (trk.hit_streak >= self.min_hits or self.frame_count <= self.min_hits):
                ret.append(np.concatenate((d,[trk.id+1])).reshape(1,-1)) # +1 as MOT benchmark requires positive
            i -= 1
            # remove dead tracklet
            if(trk.time_since_update > self.max_age):
                self.trackers.pop(i)
        if(len(ret)>0):
            return np.concatenate(ret)
        return np.empty((0,5))

REFERENCE

https://arxiv.org/pdf/1602.00763.pdf

https://github.com/abewley/sort


"DeepSORT, 제대로 이해하기" (Understanding DeepSORT Properly), gngsn.tistory.com
